As the end of 2009 approaches, I have many BraveNewClimate blog posts that are developing behind the scenes — more from the IFR FaD and TCASE series, a guest post by Tom Blees on the natural gas ‘game’, a guest post by a new BNC writer on wind farm planning problems, a report about my upcoming popular book on nuclear power (co-authored by Ian Lowe), and so on.

One of the most interesting things on the immediate horizon is a simple analysis, by Peter Lang, comparing six options for reducing CO2 emissions from Australia’s electricity generation over the period 2010 to 2050. Peter has written a number of important posts on likely wind and solar energy costs and carbon abatement potential, as these technologies are taken to a large scale (search for ‘Peter Lang’ on this page for a listing).

For now though, I want to take a bit of space to reflect on the global temperature record. With 2009 ranking among the hottest years on record [final data pending] and 2010 looking likely to be the hottest ever, it’s worth understanding where these data come from and why climate scientists consider them to be so robust. (Incidentally, on my research front, Corey Bradshaw and I are currently working on a new systematic analysis of the Australian temperature station data, to better contextualise extreme heat wave events).

So, below, I reproduce “The Temperature of Science” by Jim Hansen (arguably the world’s most famous climate scientist and a fellow SCGI member). Jim has perhaps the best understanding of this topic of anyone I know. This is a post everyone who wishes to make a comment in this area ought to read. I’ll be interested in the opinions of regular BNC readers.

——————————————————————-

The Temperature of Science

James Hansen

Background

My experience with global temperature data over 30 years provides insight about how the science and its public perception have changed. In the late 1970s I became curious about well-known analyses of global temperature change published by climatologist J. Murray Mitchell: why were his estimates for large-scale temperature change restricted to northern latitudes? As a planetary scientist, it seemed to me there were enough data points in the Southern Hemisphere to allow useful estimates both for that hemisphere and for the global average. So I requested a tape of meteorological station data from Roy Jenne of the National Center for Atmospheric Research, who obtained the data from records of the World Meteorological Organization, and I made my own analysis.

Fast forward to December 2009, when I gave a talk at the Progressive Forum in Houston, Texas. The organizers there felt it necessary that I have a police escort between my hotel and the forum where I spoke. Days earlier bloggers reported that I was probably the hacker who broke into East Anglia computers and stole e-mails. Their rationale: I was not implicated in any of the pirated e-mails, so I must have eliminated incriminating messages before releasing the hacked emails. The next day another popular blog concluded that I deserved capital punishment. Web chatter on this topic, including indignation that I was coming to Texas, led to a police escort.

How did we devolve to this state? Any useful lessons? Is there still interesting science in analyses of surface temperature change? Why spend time on it, if other groups are also doing it?

First I describe the current monthly updates of global surface temperature at the Goddard Institute for Space Studies. Then I show graphs illustrating scientific inferences and issues. Finally I respond to questions in the above paragraph.

Current Updates

Each month we receive, electronically, data from three sources: weather data for several thousand meteorological stations, satellite observations of sea surface temperature, and Antarctic research station measurements. These three data sets are the input for a program that produces a global map of temperature anomalies relative to the mean for that month during the period of climatology, 1951-1980.
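The anomaly step described above can be sketched in a few lines. This is a minimal illustration, assuming a single station's record held in a hypothetical dictionary keyed by (year, month); it is not the GISS program or its file format, and it skips the station combination and gridding that the real analysis performs.

```python
# Minimal sketch: monthly anomalies relative to a 1951-1980 base period.
# Assumption: `records` maps (year, month) -> monthly mean temperature (°C)
# for one station; this layout is hypothetical, not the GISS input format.

BASE_START, BASE_END = 1951, 1980


def monthly_climatology(records):
    """Mean temperature for each calendar month over the base period."""
    sums = {m: 0.0 for m in range(1, 13)}
    counts = {m: 0 for m in range(1, 13)}
    for (year, month), temp in records.items():
        if BASE_START <= year <= BASE_END:
            sums[month] += temp
            counts[month] += 1
    # Months with no base-period data get no climatology (None).
    return {m: (sums[m] / counts[m] if counts[m] else None) for m in sums}


def anomalies(records):
    """Departure of each monthly value from that month's base-period mean."""
    clim = monthly_climatology(records)
    return {
        key: temp - clim[key[1]]
        for key, temp in records.items()
        if clim[key[1]] is not None  # skip months lacking a baseline
    }
```

Working per calendar month means a January value is compared only with other Januaries, so the seasonal cycle drops out of the anomaly.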

The analysis method has been described fully in a series of refereed papers (Hansen et al., 1981, 1987, 1999, 2001, 2006). Successive papers updated the data and in some cases made minor improvements to the analysis, for example, in adjustments to minimize urban effects. The analysis method works in terms of temperature anomalies, rather than absolute temperature, because anomalies present a smoother geographical field than temperature itself. For example, when New York City has an unusually cold winter, it is likely that Philadelphia is also colder than normal. The distance over which temperature anomalies are highly correlated is of the order of 1000 kilometers at middle and high latitudes, as we illustrated in our 1987 paper.

Although the three input data streams that we use are publicly available from the organizations that produce them, we began preserving the complete input data sets each month in April 2008. These data sets, which cover the full period of our analysis, 1880-present, are available to parties interested in performing their own analysis or checking our analysis. The computer program that performs our analysis is published on the GISS web site.

Responsibilities for our updates are as follows. Ken Lo runs programs to add in the new data and reruns the analysis with the expanded data. Reto Ruedy maintains the computer program that does the analysis and handles most technical inquiries about the analysis. Makiko Sato updates graphs and posts them on the web. I examine the temperature data monthly and write occasional discussions about global temperature change.

Scientific Inferences and Issues

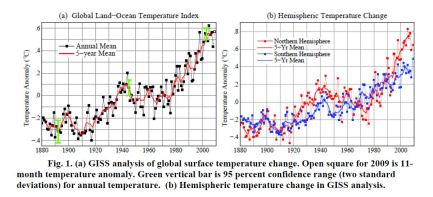

Temperature data – example of early inferences. Figure 1 shows the current GISS analysis of global annual-mean and 5-year running-mean temperature change (left) and the hemispheric temperature changes (right). These graphs are based on the data now available, including ship and satellite data for ocean regions.
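A centered 5-year running mean like the one in Figure 1 can be sketched as follows. This is an illustration only: how the GISS graphs treat the first and last years of the record is not described here, so leaving them empty is an assumption of this sketch. The same function with `window=11` gives the 11-year mean mentioned later in the essay.

```python
def running_mean(values, window=5):
    """Centered running mean over `window` points.

    Points too close to either end for a full window are returned as None,
    one simple convention among several for handling the record's ends.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd number")
    half = window // 2
    out = [None] * len(values)
    for i in range(half, len(values) - half):
        # Average the point itself and `half` neighbours on each side.
        out[i] = sum(values[i - half:i + half + 1]) / window
    return out
```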

Figure 1 illustrates, with a longer record, a principal conclusion of our first analysis of temperature change (Hansen et al., 1981). That analysis, based on data records through December 1978, concluded that data coverage was sufficient to estimate global temperature change. We also concluded that temperature change was qualitatively different in the two hemispheres. The Southern Hemisphere had more steady warming through the century while the Northern Hemisphere had distinct cooling between 1940 and 1975.

It required more than a year to publish the 1981 paper, which was submitted several times to Science and Nature. At issue were both the global significance of the data and the length of the paper. Later, in our 1987 paper, we proved quantitatively that the station coverage was sufficient for our conclusions – the proof being obtained by sampling (at the station locations) a 100-year data set of a global climate model that had realistic spatial-temporal variability.

The different hemispheric records in the mid-twentieth century have never been convincingly explained. The most likely explanation is atmospheric aerosols, fine particles in the air, produced by fossil fuel burning. Aerosol atmospheric lifetime is only several days, so fossil fuel aerosols were confined mainly to the Northern Hemisphere, where most fossil fuels were burned. Aerosols have a cooling effect that still today is estimated to counteract about half of the warming effect of human-made greenhouse gases. For the few decades after World War II, until the oil embargo in the 1970s, fossil fuel use expanded exponentially at more than 4%/year, likely causing the growth of aerosol climate forcing to exceed that of greenhouse gases in the Northern Hemisphere. However, there are no aerosol measurements to confirm that interpretation. If there were adequate understanding of the relation between fossil fuel burning and aerosol properties it would be possible to infer the aerosol properties in the past century. But such understanding requires global measurements of aerosols with sufficient detail to define their properties and their effect on clouds, a task that remains elusive, as described in chapter 4 of Hansen (2009).
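To put “more than 4%/year” in perspective, treating 4% as the lower bound quoted, steady exponential growth at that rate implies a doubling time and a 30-year growth factor of roughly:

```latex
t_{2} = \frac{\ln 2}{\ln(1.04)} \approx \frac{0.693}{0.0392} \approx 17.7\ \text{years},
\qquad 1.04^{30} \approx 3.2
```

At that rate fossil fuel use would have more than tripled over three decades.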

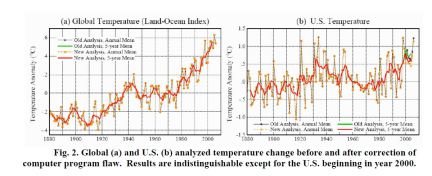

Flaws in temperature analysis. Figure 2 illustrates an error that developed in the GISS analysis when we introduced, in our 2001 paper, an improvement in the United States temperature record. The change consisted of using the newest USHCN (United States Historical Climatology Network) analysis for those U.S. stations that are part of the USHCN network. This improvement, developed by NOAA researchers, adjusted station records that included station moves or other discontinuities. Unfortunately, I made an error by failing to recognize that the station records we obtained electronically from NOAA each month, for these same stations, did not contain the adjustments. Thus there was a discontinuity in 2000 in the records of those stations, as the prior years contained the adjustment while later years did not.

The error was readily corrected, once it was recognized. Figure 2 shows the global and U.S. temperatures with and without the error. The error averaged 0.15°C over the contiguous 48 states, but these states cover only 1½ percent of the globe, making the global error negligible.
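As a rough check on “negligible”: if the 0.15°C error is treated as confined to the contiguous 48 states (about 1.5% of Earth’s surface) with everywhere else unaffected, its area-weighted contribution to the global mean is approximately

```latex
\Delta T_{\text{global}} \approx f_{\text{US}}\,\Delta T_{\text{US}} = 0.015 \times 0.15\,^{\circ}\text{C} \approx 0.002\,^{\circ}\text{C}
```

This simple area weighting is an approximation, since the gridded analysis spreads station influence somewhat beyond national borders, but the order of magnitude is the point.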

However, the story was embellished and distributed to news outlets throughout the country. Resulting headline: NASA had cooked the temperature books – and once the error was corrected 1998 was no longer the warmest year in the record, instead being supplanted by 1934.

This was nonsense, of course. The small error in global temperature had no effect on the ranking of different years. The warmest year in our global temperature analysis was still 2005. Conceivably confusion between global and U.S. temperatures in these stories was inadvertent. But the estimate for the warmest year in the U.S. had not changed either. 1934 and 1998 were tied as the warmest year (Figure 2b) with any difference (~0.01°C) at least an order of magnitude smaller than the uncertainty in comparing temperatures in the 1930s with those in the 1990s.

The obvious misinformation in these stories, and the absence of any effort to correct the stories after we pointed out the misinformation, suggests that the aim may have been to create distrust or confusion in the minds of the public, rather than to transmit accurate information. That, of course, is a matter of opinion. I expressed my opinion in two e-mails that are on my Columbia University web site:

http://www.columbia.edu/~jeh1/mailings/2007/20070810_LightUpstairs.pdf

http://www.columbia.edu/~jeh1/mailings/2007/20070816_realdeal.pdf

We thought we had learned the necessary lessons from this experience. We put our analysis program on the web. Everybody was free to check the program, if they were concerned that any data “cooking” may be occurring.

Unfortunately, another data problem occurred in 2008. In one of the three incoming data streams, the one for meteorological stations, the November 2008 data for many Russian stations was a repeat of October 2008 data. It was not our data record, but we properly had to accept the blame for the error, because the data was included in our analysis. Occasional flaws in input data are normal in any analysis, and the flaws are eventually noticed and corrected if they are substantial. Indeed, we have an effective working relationship with NOAA – when we spot data that appears questionable we inform the appropriate people at the National Climatic Data Center – a relationship that has been scientifically productive.

This specific data flaw was a case in point. The quality control program that NOAA runs on the data from global meteorological stations includes a check for repetition of data: if two consecutive months have identical data the data is compared with that at the nearest stations. If it appears that the repetition is likely to be an error, the data is eliminated until the original data source has verified the data. The problem in 2008 escaped this quality check because a change in their program had temporarily, inadvertently, omitted that quality check.
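The repetition check described above might look something like the following sketch. Everything here is an assumption for illustration: the station dictionary layout, the choice of three neighbours and the 1°C threshold are hypothetical, and this is not NOAA’s quality-control code. One design choice worth noting: neighbours that themselves repeated are excluded, so a block of stations with the same fault (as in the Russian case) cannot vouch for one another.

```python
import math


def haversine_km(p, q):
    """Great-circle distance in km between (lat, lon) points given in degrees."""
    lat1, lon1 = map(math.radians, p)
    lat2, lon2 = map(math.radians, q)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def suspicious_repeats(stations, n_neighbours=3, max_change=1.0):
    """Return ids of stations whose repeated monthly value looks like an error.

    stations: id -> {"loc": (lat, lon), "prev": last month's mean (°C),
                     "curr": this month's mean (°C)}   (hypothetical layout)
    A repeat (curr == prev) is flagged when the nearest non-repeating
    neighbours changed, on average, by more than `max_change` °C.
    """
    flagged = []
    for sid, s in stations.items():
        if s["curr"] != s["prev"]:
            continue  # no repetition, nothing to check
        # Only neighbours that did not repeat can act as independent evidence.
        others = sorted(
            (o for oid, o in stations.items()
             if oid != sid and o["curr"] != o["prev"]),
            key=lambda o: haversine_km(s["loc"], o["loc"]),
        )[:n_neighbours]
        if not others:
            flagged.append(sid)  # nothing independent to confirm the repeat
            continue
        mean_change = sum(abs(o["curr"] - o["prev"]) for o in others) / len(others)
        if mean_change > max_change:
            flagged.append(sid)  # withhold until the data source verifies it
    return flagged
```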

The lesson learned here was that even a transient data error, however quickly corrected, provides fodder for people who are interested in a public relations campaign, rather than science. That means we cannot put the new data each month on our web site and check it at our leisure, because, however briefly a flaw is displayed, it will be used to disinform the public. Indeed, in this specific case there was another round of “fraud” accusations on talk shows and other media all around the nation.

Another lesson learned. Subsequently, to minimize the chance of a bad data point slipping through in one of the data streams and temporarily affecting a publicly available data product, we now put the analyzed data up first on a site that is not visible to the public. This allows Reto, Makiko, Ken and me to examine maps and graphs of the data before the analysis is put on our web site – if anything seems questionable, we report it back to the data providers for them to resolve. Such checking is always done before publishing a paper, but now it seems to be necessary even for routine transitory data updates. This process can delay availability of our data analysis to users for up to several days, but that is a price that must be paid to minimize disinformation.

Is it possible to totally eliminate data flaws and disinformation? Of course not. The fact that the absence of incriminating statements in pirated e-mails is taken as evidence of wrongdoing provides a measure of what would be required to quell all criticism. I believe that the steps that we now take to assure data integrity are as much as is reasonable from the standpoint of the use of our time and resources.

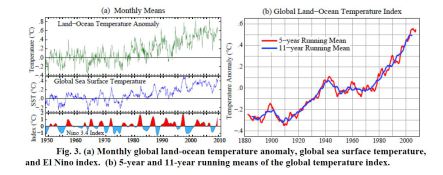

Temperature data – examples of continuing interest. Figure 3(a) is a graph that we use to help provide insight into recent climate fluctuations. It shows monthly global temperature anomalies and monthly sea surface temperature (SST) anomalies. The red-blue Nino3.4 index at the bottom is a measure of the Southern Oscillation, with red and blue showing the warm (El Nino) and cool (La Nina) phases of sea surface temperature oscillations for a small region in the eastern equatorial Pacific Ocean.

Strong correlation of global SST with the Nino index is obvious. Global land-ocean temperature is noisier than the SST, but correlation with the Nino index is also apparent for global temperature. On average, global temperature lags the Nino index by about 3 months.
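A lag like the roughly 3 months quoted above can be estimated by shifting one series against the other and finding the shift with the highest correlation. A minimal sketch, assuming two equal-length monthly series (Nino3.4 index and global temperature anomaly) as plain Python lists; it uses ordinary Pearson correlation with no detrending or significance testing, so it is illustrative rather than the method behind the figure.

```python
def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def best_lag(index, temp, max_lag=12):
    """Return (lag_in_months, r) maximising corr(index[t - lag], temp[t])."""
    if len(index) != len(temp):
        raise ValueError("series must have equal length")
    best = (0, float("-inf"))
    for lag in range(max_lag + 1):
        # Pair each temperature value with the index value `lag` months earlier.
        r = pearson(index[: len(index) - lag], temp[lag:])
        if r > best[1]:
            best = (lag, r)
    return best
```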

During 2008 and 2009 I received many messages, sometimes several per day, informing me that the Earth is headed into its next ice age. Some messages include graphs extrapolating cooling trends into the future. Some messages use foul language and demand my resignation. Of the messages that include any science, almost invariably the claim is made that the sun controls Earth’s climate, the sun is entering a long period of diminishing energy output, and the sun is the cause of the cooling trend.

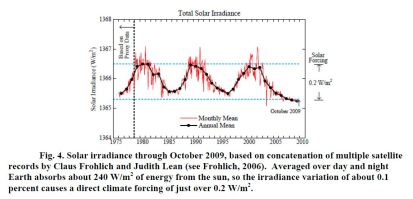

Indeed, it is likely that the sun is an important factor in climate variability. Figure 4 shows data on solar irradiance for the period of satellite measurements. We are presently in the deepest, most prolonged solar minimum in the period of satellite data. It is uncertain whether the solar irradiance will rebound soon into a more-or-less normal solar cycle – or whether it might remain at a low level for decades, analogous to the Maunder Minimum, a period of few sunspots that may have been a principal cause of the Little Ice Age.

The direct climate forcing due to measured solar variability, about 0.2 W/m2, is comparable to the increase in carbon dioxide forcing that occurs in about seven years, using recent CO2 growth rates. Although there is a possibility that the solar forcing could be amplified by indirect effects, such as changes of atmospheric ozone, present understanding suggests only a small amplification, as discussed elsewhere (Hansen 2009). The global temperature record (Figure 1) has positive correlation with solar irradiance, with the amplitude of temperature variation being approximately consistent with the direct solar forcing. This topic will become clearer as the records become longer, but for that purpose it is important that the temperature record be as precise as possible.
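The comparison between 0.2 W/m2 of solar forcing and about seven years of CO2 growth can be checked with the widely used simplified expression for CO2 forcing, ΔF = 5.35 ln(C/C0) W/m2 (Myhre et al., 1998). The starting concentration (about 387 ppm) and growth rate (about 2 ppm per year) below are assumed round numbers for the late 2000s, not values taken from this essay.

```python
import math


def co2_forcing_change(c_start_ppm, c_end_ppm):
    """Change in CO2 radiative forcing (W/m^2) from the simplified 5.35*ln(C/C0) fit."""
    return 5.35 * math.log(c_end_ppm / c_start_ppm)


# Assumed round numbers for the late 2000s (not from the essay).
C_START_PPM = 387.0
GROWTH_PPM_PER_YEAR = 2.0
YEARS = 7

delta_f = co2_forcing_change(C_START_PPM, C_START_PPM + GROWTH_PPM_PER_YEAR * YEARS)
print(f"CO2 forcing added in {YEARS} years: {delta_f:.2f} W/m^2")
```

With these inputs it prints about 0.19 W/m^2, consistent with the essay’s comparison.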

Frequently heard fallacies are that “global warming stopped in 1998” or “the world has been getting cooler over the past decade”. These statements appear to be wishful thinking – it would be nice if true, but that is not what the data show. True, the 1998 global temperature jumped far above the previous warmest year in the instrumental record, largely because 1998 was affected by the strongest El Nino of the century. Thus for the following several years the global temperature was lower than in 1998, as expected.

However, the 5-year and 11-year running mean global temperatures (Figure 3b) have continued to increase at nearly the same rate as in the past three decades. There is a slight downward tick at the end of the record, but even that may disappear if 2010 is a warm year. Indeed, given the continued growth of greenhouse gases and the underlying global warming trend (Figure 3b) there is a high likelihood, I would say greater than 50 percent, that 2010 will be the warmest year in the period of instrumental data. This prediction depends in part upon the continuation of the present moderate El Nino for at least several months, but that is likely.
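The point about 1998 can be illustrated with an ordinary least-squares trend. The sketch below uses a purely synthetic series (a steady 0.02°C per year rise plus a one-off 0.2°C spike in 1998, chosen only for illustration, not real data) to show that starting a short trend at a spike year pulls the fitted slope down without removing the underlying warming.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den = sum((x - mx) ** 2 for x in xs)
    return num / den


# Synthetic illustration only: linear warming plus an El Nino-like spike in 1998.
years = list(range(1980, 2010))
temps = [0.02 * (y - 1980) + (0.2 if y == 1998 else 0.0) for y in years]

full = ols_slope(years, temps)
short = ols_slope(years[18:], temps[18:])  # 1998-2009, starting at the spike
print(f"1980-2009: {full * 10:.3f} C/decade; 1998-2009: {short * 10:.3f} C/decade")
```

With these made-up inputs the short-window slope comes out around 0.12°C per decade versus about 0.20 for the full period: lower, but still positive.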

Furthermore, the assertion that 1998 was the warmest year is based on the East Anglia – British Met Office temperature analysis. As shown in Figure 1, the GISS analysis has 2005 as the warmest year. As discussed by Hansen et al. (2006) the main difference between these analyses is probably due to the fact that the British analysis excludes large areas in the Arctic and Antarctic where observations are sparse. The GISS analysis, which extrapolates temperature anomalies as far as 1200 km, has more complete coverage of the polar areas. The extrapolation introduces uncertainty, but there is independent information, including satellite infrared measurements and reduced Arctic sea ice cover, which supports the existence of substantial positive temperature anomalies in those regions.
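The 1200 km extrapolation can be sketched as a distance-weighted average of station anomalies. In this minimal sketch the weight falls linearly from 1 at the station to 0 at 1200 km, which follows the published GISS description (Hansen and Lebedeff, 1987) as we understand it; treat that, the spherical-Earth distance and the simple (lat, lon, anomaly) input format as assumptions. The real analysis combines overlapping station records more carefully than this single weighted mean.

```python
import math

EARTH_RADIUS_KM = 6371.0
INFLUENCE_RADIUS_KM = 1200.0  # extrapolation distance quoted in the essay


def great_circle_km(p, q):
    """Haversine distance in km between (lat, lon) points given in degrees."""
    lat1, lon1 = map(math.radians, p)
    lat2, lon2 = map(math.radians, q)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def estimate_anomaly(target, stations, radius_km=INFLUENCE_RADIUS_KM):
    """Weighted mean anomaly at `target` from (lat, lon, anomaly) station tuples.

    Weight = 1 - d / radius_km, so it tapers linearly to zero at radius_km.
    Returns None when no station lies within range.
    """
    num = den = 0.0
    for lat, lon, anomaly in stations:
        d = great_circle_km(target, (lat, lon))
        if d < radius_km:
            w = 1.0 - d / radius_km
            num += w * anomaly
            den += w
    return num / den if den > 0 else None
```

Returning None for an empty neighbourhood mirrors the choice the essay describes for the British analysis: with no nearby data, a region is left blank rather than filled.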

In any case, issues such as these differences between our analyses provide a reason for having more than one global analysis. When the complete data sets are compared for the different analyses it should be possible to isolate the exact locations of differences and likely gain further insights.

Summary

The nature of messages that I receive from the public, and the fact that NASA Headquarters received more than 2500 inquiries in the past week about our possible “manipulation” of global temperature data, suggest that the concerns are more political than scientific. Perhaps the messages are intended as intimidation, expected to have a chilling effect on researchers in climate change.

The recent “success” of climate contrarians in using the pirated East Anglia e-mails to cast doubt on the reality of global warming* seems to have energized other deniers. I am now inundated with broad FOIA (Freedom of Information Act) requests for my correspondence, with substantial impact on my time and on others in my office. I believe these to be fishing expeditions, aimed at finding some statement(s), likely to be taken out of context, which they would attempt to use to discredit climate science.

There are lessons from our experience about care that must be taken with data before it is made publicly available. But there is too much interesting science to be done to allow intimidation tactics to reduce our scientific drive and output. We can take a lesson from my 5-year-old grandson who boldly says “I don’t quit, because I have never-give-up fighting spirit!” http://www.columbia.edu/~jeh1/mailings/2009/20091130_FightingSpirit.pdf

There are other researchers who work more extensively on global temperature analyses than we do – our main work concerns global satellite observations and global modeling – but there are differences in perspectives, which, I suggest, make it useful to have more than one analysis. Besides, it is useful to combine experience working with observed temperature together with our work on satellite data and climate models. This combination of interests is likely to help provide some insights into what is happening with global climate and information on the data that are needed to understand what is happening. So we will be keeping at it.

*By “success” I refer to their successful character assassination and swift-boating. My interpretation of the e-mails is that some scientists probably became exasperated and frustrated by contrarians – which may have contributed to some questionable judgment. The way science works, we must make readily available the input data that we use, so that others can verify our analyses. Also, in my opinion, it is a mistake to be too concerned about contrarian publications – some bad papers will slip through the peer-review process, but overall assessments by the National Academies, the IPCC, and scientific organizations sort the wheat from the chaff.

The important point is that nothing was found in the East Anglia e-mails altering the reality and magnitude of global warming in the instrumental record. The input data for global temperature analyses are widely available, on our web site and elsewhere. If those input data could be made to yield a significantly different global temperature change, contrarians would certainly have done that – but they have not.

References

Fröhlich, C., 2006: Solar irradiance variability since 1978. Space Science Rev., 248, 672-673.

Hansen, J., D. Johnson, A. Lacis, S. Lebedeff, P. Lee, D. Rind, and G. Russell, 1981: Climate impact of increasing atmospheric carbon dioxide. Science, 213, 957-966.

Hansen, J.E., and S. Lebedeff, 1987: Global trends of measured surface air temperature. J. Geophys. Res., 92, 13345-13372.

Hansen, J., R. Ruedy, J. Glascoe, and Mki. Sato, 1999: GISS analysis of surface temperature change. J. Geophys. Res., 104, 30997-31022.

Hansen, J.E., R. Ruedy, Mki. Sato, M. Imhoff, W. Lawrence, D. Easterling, T. Peterson, and T. Karl, 2001: A closer look at United States and global surface temperature change. J. Geophys. Res., 106, 23947-23963.

Hansen, J., Mki. Sato, R. Ruedy, K. Lo, D.W. Lea, and M. Medina-Elizade, 2006: Global temperature change. Proc. Natl. Acad. Sci., 103, 14288-14293.

Hansen, J. 2009: “Storms of My Grandchildren.” Bloomsbury USA, New York. (304 pp.)

Filed under: Clim Ch Q&A, Hot News, Sceptics


To John Kerry goes the most roundly quotable sound bite of Copenhagen:

“There isn’t a nation on the planet where the evidence of the impacts of climate change isn’t mounting. Frankly, those who look for any excuse to continue challenging the science have a fundamental responsibility which they have never fulfilled: Prove us wrong or stand down. Prove that the pollution we put in the atmosphere is not having the harmful effect we know it is. Tell us where the gases go and what they do. Pony up one single, cogent, legitimate, scholarly analysis. Prove that the ocean isn’t actually rising; prove that the ice caps aren’t melting, that deserts aren’t expanding. And prove that human beings have nothing to do with any of it. And by the way — good luck!”

According to

http://www.sustainablenuclear.org/PADs/pad0509till.html

“The anti-IFR forces were led by John Kerry”.

Has he come around finally? Why?

What do you think about this report from postcarbon.org, a leading sustainability group? Is 4th generation nuclear really as likely as clean coal, i.e. not going to happen?

http://www.postcarbon.org/report/44377-searching-for-a-miracle

Lawrence: I think you will find John Kerry is still anti-IFR; he has just come out pro-carbon. I would like to turn the tables on his little speech and, whilst agreeing with what he says (to a point), ask: “Prove human beings have ‘everything’ to do with it. And by the way – good luck!”

2010 is going to be a hot year in terms of the level of debate. If the temps are cool the GW do-nothings will be emboldened. If it is a bad year for food production (in conjunction with fuel prices) household budgets could be strained. The deniers will fall mysteriously silent until their next opportunity.

In theory, as of 1 July 2010 we should be paying $10 on (100-x)% of the tonnage of CO2 from dirty energy, with x% the fraction of free permits the industry gets after offsets. Thus the price of Hazelwood electricity could conceivably go up a whopping 1.4c per kWh before free permits.
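The 1.4c figure can be unpacked as simple unit arithmetic. It implies an emissions intensity of about 1.4 t CO2 per MWh for Hazelwood; that intensity is inferred from the figure, not stated in it, and the result ignores free permits as noted:

```latex
\$10\ \text{per t CO}_2 \times 1.4\ \tfrac{\text{t CO}_2}{\text{MWh}} = \$14\ \text{per MWh} = 1.4\ \text{c/kWh}
```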

July 2010 is also when the Olympic Dam expansion will be announced. Since Premier Rann invited the Copenhagen delegates back to SA to see how cleantech is done, I wouldn’t hold your breath. Imagine this in 2010: a hot year, high food prices and obstacles to the adoption of both Gen III and Gen IV.

@ Rich – the report just rehearses the same tired shibboleths against nuclear energy that we have heard repeated ad nauseam and contains nothing new.

The waste ‘problem’ was solved to everyone’s satisfaction in other nuclear power countries long before it became a political issue in the States. Uranium is not running out and even if it was thorium can pick up the slack. Nuclear energy does not produce significant amounts of carbon at any point in the cycle, and what it does is minuscule compared to any other source on a per-quad basis. The costs and construction times of NPP compare favorably to any thermal power plant in those countries that lack antinuclear movements that can use political and legal harassment to inflict endless delays.

Most GenIV designs have had working prototypes at one time or another and could be brought to commercial fruition within a decade at most, should there be the political will to do so.

There is nothing in that report as far as nuclear energy goes that hasn’t been dismissed outright as many times as it has come up. It’s getting tedious; one starts to wish they could come up with something fresh to complain about, so that we could give new answers.

I think the data on temperature since we launched satellites to monitor it are probably extremely good. The earlier data relating to thermometer readings is probably quite a bit more uncertain due to difficulties adjusting for the urban heat island effect, poor sample sizes, disputed adjustment methodologies and other factors. When it comes to the temperature record going back 1000-2000 years I think we are into territory that is quite speculative. In particular I think the elimination of the Medieval Warm Period from the historical record is very suspect. I’m not overly impressed by the proxy analysis. And I’m not convinced that we are living in the warmest period of the last 2000 years.

The concerns raised by climategate have much more to do with the reliability of proxy data than with the reliability of temperature data over the last century.

In terms of CO2 concentrations I am satisfied with the assertion that we have increased it and it is at a long term historical high. I think this is reasonable cause for some concern.

I oppose the Rudd government ETS. I suspect that Tony Abbott has no clue what to do. The Liberals get some points for notionally supporting nuclear. However neither of them will win my vote on the basis of this issue. I’ll probably just vote for lower taxes, whoever offers them.

I would be very supportive of a carbon tax targeted narrowly at electricity production and transport if it was revenue neutral (i.e. other taxes were cut proportionally). However, neither the Liberals nor the Labor party is offering this.

p.s. Somebody ought to be doing some polling to figure out what it is about nuclear power that most bothers the public.

Is it the risk of an accident?

Is it long-term nuclear waste management?

Is it nuclear weapons proliferation risk?

In all cases the depth and breadth of opposition needs to be measured. For instance, whilst it might be interesting to know the answers to the above questions for the general public, it is probably more interesting to know the answers given by those who are only modestly against nuclear. In other words, what information does it take to change the minds of those who are prone to changing their minds?

I don’t know how a pro-nuclear campaign can be effectively targeted until public opinion is properly qualified in this manner.

“p.s. Somebody ought to be doing some polling to figure out what it is about nuclear power that most bothers the public.”

It tends to vary geographically and demographically, with the three you mentioned plus radiation from the facility itself making up the big four. Ultimately all need to be dismissed; however, the best strategy in my opinion is to push the advantages rather than try to answer the critics.

All of their fears are artificial constructs of the antinuclear movement, and because of this they control where the goalposts are in these arguments and there is little hope that we can outflank them on these issues. The only thing we can do to counter these is to keep underlining the fact that other modes of generation are worse in terms of the same issues, or incapable of assuming the load.

But a full-frontal attack on the standard antinuclear talking points plays right into their hands.

Really good idea to discuss why people don’t accept nuclear and from that figure out how to change their minds.

The ‘us’ and ‘them’ stance towards those who don’t agree about the benefits of nuclear is unproductive. Dialogue and debate is better unless people here think they are as bad as climate change deniers.

I’m saying this because of the understated remark by Jim Hansen at the beginning of his essay that he needed a police escort to address that forum in Texas. He wasn’t receiving police protection from anti-nuclear people. Likewise, I am fairly confident that it wasn’t anti-nuclear people who hacked CRU.

So don’t forget who are the ones doing the damage. The dangerous ones will use fighting and arguments between groups such as pro and anti nuc to their advantage.

And if I haven’t convinced you not to think of anti-nuc people as scum, then remember the greenies were doing a lot of the heavy lifting on alerting people to AGW long before you or I were.

Meantime, I’m looking forward to Barry and Ian Lowe’s book (when is it published, Barry?). We should work to get that, Tom Blees’s material, et al. out to as many people as possible.

And finally, just two tips if you find yourself addressing a public gathering on the benefits of advanced nuclear (e.g. Barry’s book or Tom’s material might give you the opportunity):

1. don’t go into a spiel whining and complaining about anti nuclear stances or people no matter how provoked.

2. Shave the beard off…..no ‘buts’ shave it.

You have to be careful who you try and debate with. The leadership in the antinuke movement has it down to a science, they have been at it for a long time and they have stock replies for everything and are generally more skilled at demagoguery and rhetoric than we are. In front of a crowd they will eat you alive if you’ve never fought in that type of arena before.

We need to pass those types over and get our message directly to the public. Only the public demanding that more attention be paid to nuclear energy will let us beat the real enemy, which is Big Carbon and its deep pockets. That is because there is only one thing more valuable to a politician than money: votes. If politicians are made to believe that votes are at risk, or can be won, based on their support of nuclear energy, they will back it. Without that, we can scream ourselves hoarse at the antinukes and nothing will change.

Dialogue and debate is better unless people here think they are as bad as climate change deniers.

They are. Continued opposition to civilian nuclear power at this point is well nigh criminal. It would not be inappropriate to charge anti-nuclear leaders with crimes against humanity.

And if I haven’t convinced you not to think of anti-nuc people as scum, then remember the greenies were doing a lot of the heavy lifting on alerting people to AGW long before you or I were.

The early successes of the anti-nukes led to the continuation and expansion of coal power (by open and deliberate policy in some cases, such as Lovins), and I believe that some of the earliest figures alerting the world to the possibility of AGW were nuclear scientists such as Weinberg.

Shave the beard off…..no ‘buts’ shave it.

I’m not going to be complying with that advice, but I’m interested in why you think it important.

“Shave the beard off…..no ‘buts’ shave it.

I’m not going to be complying with that advice, but I’m interested in why you think it important.”

Let’s just say that, as an engineer, I’ve had years to observe how my fellow engineers come across and why scientists wipe the floor with them in the public relations stakes.

You need different messages for different audiences. At a shop in Leederville you can buy a packet of 10 different beards and moustaches – perfect for all occasions from discussions at the men-only exclusive clubs to the local primary school or supermarket, through to TV debates.

Personally I think Hugo Chavez’ comment on Copenhagen was better than John Kerry’s:

“What a pity that global warming isn’t caused by Wall St banks. We’d find the money immediately”.

Hey people – we are way off topic – remember this thread is about the evidence for increased temperatures (and the appalling attacks on scientists) not the nuclear/anti-nuclear argument. We have been through all this rhetoric on other threads. Anyone got a comment pertinent to the present subject?

What appalling attacks on scientists?

Gordon, you said:

That one is tritely easy. We know with extremely high confidence, from isotopic signatures, that the additional CO2 in the atmosphere was put there by us. We know that CO2 is a potent heat trapping gas, we know that the planet is visibly warming, and we are unable to identify any other possible causes of the increase. While “everything” is not scientifically legitimate, and no one claims 100.00% certainty, we do know with better than 90% certainty that this is the probable explanation.

So we come back to Kerry’s quote. Do you really have anything to say against it? Can you meet his challenge? Do you think the risk analysis would suggest we should do nothing until >90% certainty goes to >99% certainty?

TerjeP

“What appalling attacks on scientists?”

“Attacks” meaning verbal attacks – but you’re right – I should have said appalling threats of attack on scientists and their families.

I have a feeling Terje means that he thinks the attacks are justified, not appalling, as opposed to being worried about physical attacks.

@ Wilful

“we know that CO2 is a potent heat trapping gas, we know that the planet is visibly warming, we are unable to identify any other possible causes of the increase. While “everything” is not scientifically legitimate, no one claims 100.00% certainty, we do know with better than 90% certainty that this is the probable explanation.”

I am sorry, but being unable to identify any other possible cause… CO2 is a potent heat trapping gas? Water vapor? Sun? Cyclical changes in ocean biology? Can you back that up please?

I still don’t understand how a change from 250 parts per million to 360 parts per million can cause a large change in temperature. I have taken basic chemistry and physics, and I don’t have an axe to grind here. On the other hand, I am very cautious about solutions that force the cost of energy up and transfer wealth from the poorest to governments, especially when they are sold as a response to a crisis that requires putting us back in the Stone Age... or before.

I am very very suspicious of the article that constantly claims that “deniers” are simply political while the “science” is clear. The measurements he uses for temps in the southern hemisphere cover up to 1200 miles. I find that simply incredible. What are the potential errors in that?

I guess I am willing to listen to the science, but I want to make sure that those who have different points of view are clearly heard, not shut out of the process. All I hear is correlations: CO2 and temps are rising, so it’s our fault. I actually hear very little science, such as “A rise of this % in CO2 levels in this volume of air will absorb X joules.”

Show me the science.

Finally, it is sad to see someone harp about the politics of people opposing a position rather than the politics of those who want to take advantage of the “science.”

David, it can’t… That is why the group 350.org set a target of 350 ppm. It is where we are headed that is the problem: 450 ppm and beyond.

Ms Perps, the scientists in question are big kids. They have demonstrated ample ability to rubbish the reputations of others through deceit and concealment. If you play dirty in the big sandpit year after year, then you shouldn’t be too surprised if somebody gives you a bit of a kick when you fall over. Having said that, the guys at sites such as Climate Audit have been remarkably civilised in their “attack”.

p.s. As for physical threats of violence I don’t support or condone those.

John Humphreys is always worth a read when the media decide to publish him:-

http://www.smh.com.au/opinion/politics/activists-should-stop-talking-about-global-warming-and-start-acting-20091222-lbpp.html?posted=sucessful

It seems to have confused Andrew Bolt.

http://blogs.news.com.au/heraldsun/andrewbolt/index.php/heraldsun/comments/greens_dont_do_sweat/

Wilful:

I like Prof Karoly’s current stand: if we reduce CO2 right now, then we have a 50:50 chance of averting climate change. Whilst the Prof sounds like a fence-sitter, would we be better off just preparing for climate change, as we have throughout history?

@ Gordon,

Preparing for climate change… Well, if the rise in CO2 is NOT capable of increasing temperatures, or if the rise is due to Pinatubo, Mayon or Mt St Helens, to name a few, then changing our use of CO2 will not effect any change in climate.

What I am seeing is not an attempt to change our use of CO2 but an attempt to control our lives through micromanagement and taxes. This is the reason that so many commenters on this article are mentioning nuclear power. Just this month the Environmental Protection Agency listed human-produced CO2 as harmful to human health.

Sooo…. Replacing Coal burning electricity? Why we need Natural Gas!! (Still emitting CO2) Cap and Trade? Why now! You get to pay your neighbor so you have the privilege of breathing. You CO2 emitter you..

If the solutions being offered were closer to the actual stated problem I would be more likely to listen.

I was deeply impressed with a reply by Kirk Sorensen to a comment. He took the time – in a few lines – to show the case for thorium being more energy dense than a barrel of oil so that you can get about 100 times the energy out of the same volume of rock.

https://www.blogger.com/comment.g?blogID=26752655&postID=8134505749075759084&isPopup=true

This was impressive, concise and to the point with clear numbers backing up his statement. THAT’s what I am looking for. Show me the numbers.

Frankly, temperature readings that are adjusted to cover a 1200 mile radius or so don’t impress me, especially when these numbers are being used for political reasons. Hansen is complaining that posting raw numbers brings complaints. He wants a few days to take out the errors first. But how do we know these are errors? If all we see are adjusted data, how can we ever cross-check what he is concluding?

He probably is an honest man and a good scientist. However, in this climate it is best to take the slings and arrows and demonstrate your conclusion.

TerjeP

It is exactly the physical threats of violence that I am concerned about – especially those in the USA where anyone can, and often does, own a gun.

I am sure that most scientists can, and do, shrug off any attacks on their scientific credibility or persona – as you point out they are big boys and girls and used to the rough and tumble of the scientific fraternity.

Cowards who anonymously threaten harm to anyone they disagree with are beyond contempt!

Ms Perps,

Are you seriously worried about physical threats of violence? Because people own guns?

The people who are frustrated at this are worried about their freedom. They are not blindly striking out; they are totally frustrated at the manipulation of their lives in the name of “science.” The hyperbole in the letters is rhetorical. Some real gestures of openness would go a long way toward calming things down.

1. Rebuke the people who are producing movies and articles saying that the world is going to end in 5 years unless we all return to the stone age.

2. Publish the raw data, including the source code for computer models.

3. Allow dissent in peer-reviewed journals. And Wikipedia.

There are more but these would go a long way toward establishing confidence in the general public toward the science used.

The delusion that there are political solutions to every problem is a reality of our modern society.

The delusion that a modern lifestyle will destroy us is being strongly propagated by most segments of political life and news media. Media lives by promoting fear, politicians live by seeming to solve problems. In this context scientists have the capacity to feed the fear or to rebuke it.

David

Threatening to kill people is hardly “hyperbole”; in fact it is illegal, at least in Australia. I am saying that the easy availability of firearms in the USA makes it much more possible for death threats to be carried out, and at a distance too. I, and most Australians, do not consider that bearing arms should be a “right” as it is in America. I think our lower violent death rates, particularly from gunshot wounds, vindicate that position. How many massacres do you need in the US before reforms occur? The last one in Australia, at Port Arthur, was the final straw that brought about a bipartisan political agreement to severely limit gun ownership.

Ms Perps

Thank you so much for your deep concern.

If it was illegal in the USA to say someone should be killed much of the American left would be in Jail after the last presidency. It was fairly common for someone to suggest that George Bush should be killed.

I firmly believe that bearing arms is a right. Both to defend your person and to quell the tendency toward tyranny. Freedom is more valuable than life. As Patrick Henry said “Give me liberty or give me death!” The question of history is not how tyranny arose – it is common, the question is how did liberty arise?

The massacres in the USA in the past few years have been tragic. However, after 200 years of the right to bear arms, why now is there a spate of killings, and not just in the USA? For over 200 years we did not have this kind of killings. There is a different and deeper reason for this than the simple possession of weapons. Banning weapons will not remove that reason.

It is also a recent development that people make a pastime of saying they will kill someone they disagree with. I am deeply saddened by this. For most it is hyperbole: a horrible way to deal with people, but mostly just words. I am praying for my nation to change. May God give us a great awakening as he has in the past. I trust in the God who is with us, Jesus Christ, to transform people, not politics.

Have a Joyful Christmas!

David – you are entitled to your opinion. However, I must point out that both Australia and Great Britain are democratic countries where the citizens live in freedom, without any tyranny, and without the necessity to have a gun in every home, or on one’s person. Indeed in England even the general police force is unarmed and a special armed group can only be called upon as a last resort.

The number of individuals, both criminals and innocent bystanders, killed in the US each week exceeds, by orders of magnitude, the number killed in Australia or the UK. When a person is shot by police (or by illegally armed civilians) in those countries, it makes news and warrants an investigation into the circumstances while the police involved are stood down. Shootings are so commonplace in the US that I doubt anyone knows or cares.

Trust in God and Jesus Christ by all means but if you do, why would you need to bear arms anyway? Jesus Christ preached peace, turn the other cheek and love thy neighbour – not make sure you have a right to a gun so you can impose your will on others if God and Jesus let you down.

Oh – and I reckon much of the Right in America would be in prison too for threatening behaviour – Klu Klutz Klan members to name just one group! I suspect the negroes didn’t have guns to defend against, and save themselves from the tyranny, of those who did. Total HYPOCRISY David!

I’m inclined to agree with David on this. I’d probably cite New Zealand and Switzerland as counterpoints to those made by Ms Perps. I’d happily go head to head on the Port Arthur issue and the irrationality of the subsequent ban on semi-automatics. However, do we really want to go that far off topic? I’d suggest not. I don’t think a gun control debate is what Barry intended when he created this article. Perhaps we should all take a deep breath and let it pass.

Ms Perps

“Trust in God and Jesus Christ by all means but if you do, why would you need to bear arms anyway? Jesus Christ preached peace, turn the other cheek and love thy neighbour – not make sure you have a right to a gun so you can impose your will on others if God and Jesus let you down.”

When the life in danger is my own, I can turn the other cheek and even die if necessary. When it is your life in danger, I have a duty to defend you, with my own life if necessary. This is an imposition of my will on others to the degree that I refuse to let them harm you. I hold your life sacred.

“Oh – and I reckon much of the Right in America would be in prison too for threatening behaviour – Klu Klutz Klan members to name just one group! I suspect the negroes didn’t have guns to defend against, and save themselves from the tyranny, of those who did. Total HYPOCRISY David!”

No, not hypocrisy, just a note of reality that people of all political persuasions in the USA use threats as a verbal attack. Including the left. While I wish this were not the case, I recognize that it is a verbal tactic and not a planned action.

The actions of the KKK have been roundly rebuked in the USA. You have no idea what my life experience has been or the price I have paid for friendship with Blacks. I wish that the Negroes of that time were able to defend themselves. Guns would have been a mercy for them at that time. They were kept in fear and subjugation through intimidation. Horribly wrong and unjust. If they had been armed would the KKK have been so free in lynching them? I think not.

When faced with a person determined to kill you – defense is a reasonable response and a Christian one.

In the context of this blog, scientists need to exercise a conversational defense. As I suggested above. The best defense for a scientist is openness, transparency and the willingness to allow contrary voices. Until I can see true openness in peer reviewed journals, I will doubt the motives of those publishing.

Thanks for an interesting conversation and for challenging me. It is fun to think through how to respond clearly. Have a great day!

@David: you write: “The best defense for a scientist is openness, transparency and the willingness to allow contrary voices. Until I can see true openness in peer reviewed journals, I will doubt the motives of those publishing.”

No, Benedict Arnold, you omit the prerequisite for such scientists to debate against opponents of goodwill who share a respect for scientific method, so as to bring Science forward. But your ilk has neither goodwill nor any science. Because statistically, climate change denier criminals are white US Republican voters earning above-average money and “M16-frantic” to deny what is going to happen to their intra-US stock portfolios as the isotherms move steadily north up the USA (do you know what an isotherm is? do you begin to understand even a popular-style climate blog? )

Never mind your attempts at Protestant Biblical Revelation to us all on this blog, it is your spelling, NRA gunlover spin and obscurantism which place you firmly as heir to the US Know Nothing tradition of the 1840s. You have merely replaced 1840s immigrant Catholics to the USA with empirical scientists as your enemies, that’s all.

As a Monckton-Bellamy-McIntyre worshipper (you forget the Biblical injunction: “I am the Lord thy God, thou shalt have no false gods before me…”), you have demonstrably no idea about what peer-review in science means.

And releasing raw data to elements like you who are deeply hostile towards empirical facts merely allows your kind to lie, twist and fake in US media articles placed through the PR agencies paid for by your fossil fuel lobby paymasters. There are at least 3 books by US authors detailing this already.

Raw data given to liars and benders wastes invaluable climatologist research time in having to deal subsequently with people like you who, far from even rearranging the deckchairs on the Titanic, keep shouting aggressively that there is no iceberg anyway.

So check out either the Real Climate website or the current issue of New Scientist, http://www.newscientist.com for evidence of the data forgery, data distortion and lies on which you rest your risible “case”, dishonouring the noble word “sceptic”.

In conclusion, get up to Alaska to check out the dwindling ice and talk to a few Inuit. On second thoughts, don’t: your presumed heroine S. Palin is up there.

Agreed – this is not the place for a gun control argument. I am breathing deeply and letting it pass:)

@ Peter

Wow, that was intense. The prejudice is amazing. You have no idea who I am, where I live, or what color I am, or whether I am arrogant or kind. Do you always impugn the color, religion and motives of everyone you have a discussion with? What gives you the idea that I am Protestant? Or that I own a gun?

Do you have any idea how many ways that culture impacts communication? This was my simple point. I thought it was a common one. I am not denying scientific information. I am not wealthy. You have no idea of my income level.

I watched people unable to buy rice last year when the price of fuel rose to the point where the poorest people I knew could not purchase food for their families. High fuel and energy costs hit the poorest people in the most poverty-ridden countries hardest. It was heartbreaking for me to watch. Friends of mine lost their small family businesses, which made about $500 a month for a family of 9. The flour became too expensive to keep making donuts to sell.

So, am I concerned that we get the science right? YES! Am I deeply concerned that we are rushing into solutions whose deliberate aim is to raise the cost of energy? Yes, I am deeply worried.

You see, I don’t want my friends to die at the altar of AGW.

I don’t know who “As a Monckton-Bellamy-McIntyre worshipper” is. I won’t take the time for a Google search either.

Denier? Just don’t kill my friends to save the planet.

I am wondering if you know what a LFTR is?

Say what? This is the same Ian Lowe who wrote the Quarterly Essay Reaction Time: Climate Change and the Nuclear Option saying, amongst other things, “Promoting nuclear power as the solution to climate change is like advocating smoking as a cure for obesity. That is, taking up the nuclear option will make it much more difficult to move to the sort of sustainable, ecologically healthy future that should be our goal”?

Should be interesting. I can’t wait.

It’s a debate book… More details soon.
